Ali-Gombe, A., & Elyan, E. (2019). MFC-GAN: class-imbalanced dataset classification using multiple fake class generative adversarial network. Neurocomputing, 361, 212-221.

In Short

Data Geneation(Augmentation) with Multiclass Fakes

1. Introduction

Since both minority and majority classes come from the same distribution, these classes share some common features.

=> Features learned from majority classes should aid in learning the minority classes.

=> Class conditioned generation will focus the model into sampling minority classes.

2. Related Works

2-1. Mutliclass에 대한 관심 부족

binary classification에 대해서는 연구가 많이 이루어졌지만, 이에 비해 multiclass classification에는 상대적으로 관심이 적었다. 그럼에도 이와 관련된 선행연구들이 있긴 한데, 예를 들어서 multi-class decomposition, Class Rectification Loss(CRL), mean squared false error 등이 있다.

- CRL: it performs hard mining of the minority class is each each batch forcing the model to create a boundary for each minority class with a hard positive and negative threshold.

- LMLE(Large Margin Local Embedding): it employs clustering among classes to maintain the structure of the minroity data.

=> 하지만 위의 두 방법론은 데이터가 클 경우 계산량이 너무 많아진다는 단점들이 있다.

2-2. SMOTE

SMOTE와 같이 기존에 유명한 방식들은 극단적으로 불균형인 데이터에 대해서는 비효율적이라고 알려져있다.

2-3. GAN의 발전

minority class data를 생성하기 위해서 C-GAN(Conditional GAN)이 잠재적 해결방안이 될 수는 있지만, 완벽하지는 않다. 이처럼 vanilla GAN이나 AC-GAN들도 데이터가 불균형한 상태에서는 minority sample을 생성하는 데에 있어서 효과적이지 못했다. DCGAN이나 MelanoGAN은 효과적이기는 했으나, multiclass가 아닌 binary case에서였다.

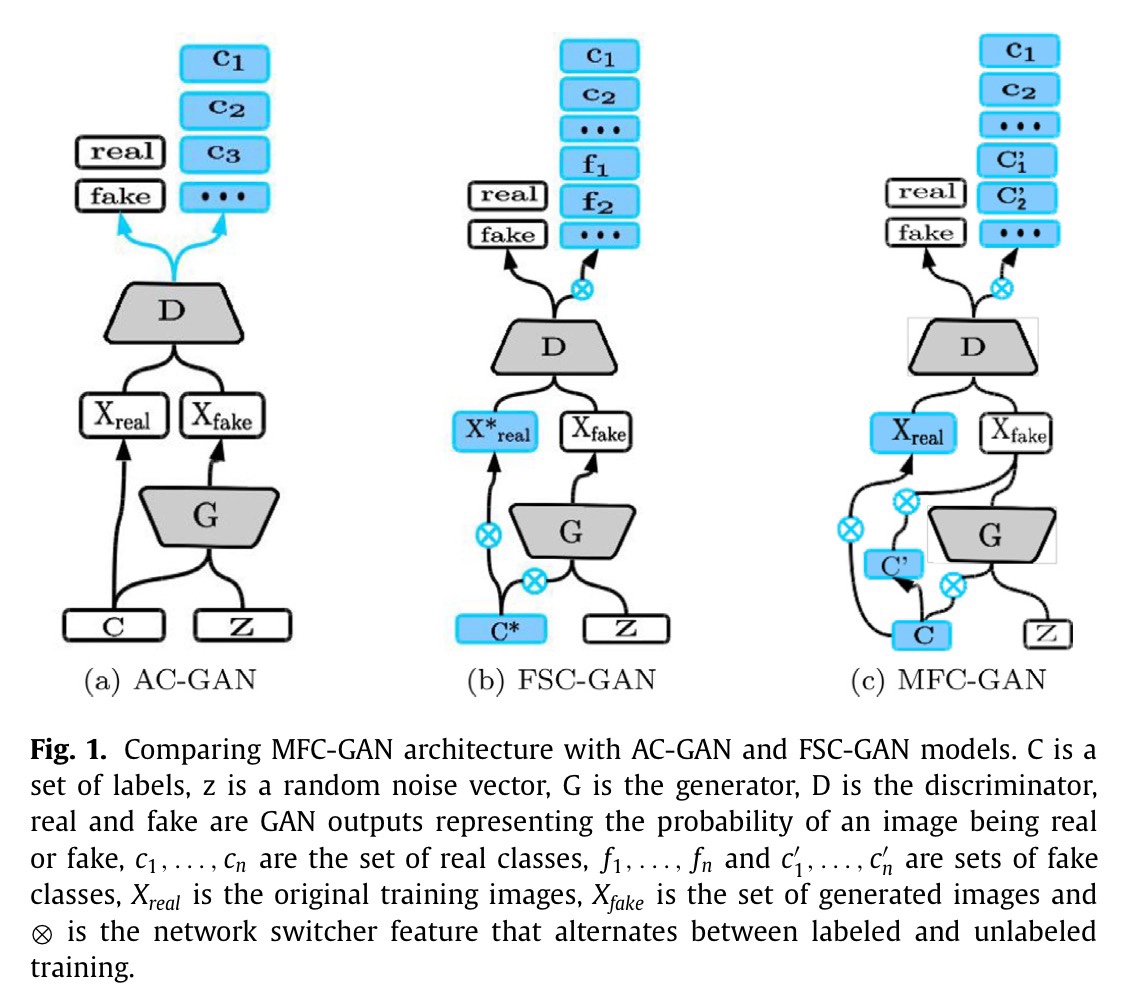

3. Method

GAN은 기본적으로 두 가지 종류의 학습데이터를 사용한다. 하나는 기존의 학습데이터에 있는 원본데이터이고, 나머지 하나는 Generator가 생성한 샘플들(fake)이다.

10개의 클래스가 있다고 했을 때, 원래는 one-hot encoding 방식으로 1000000000로 표시했다. 하지만 여기서는 fake image에 대한 label도 따로 신경써준다. 그렇기 때문에 real image는 10000000000000000000로 표시하고, 이에 해당하는 fake image의 label은 00000000001000000000로 표시한다.

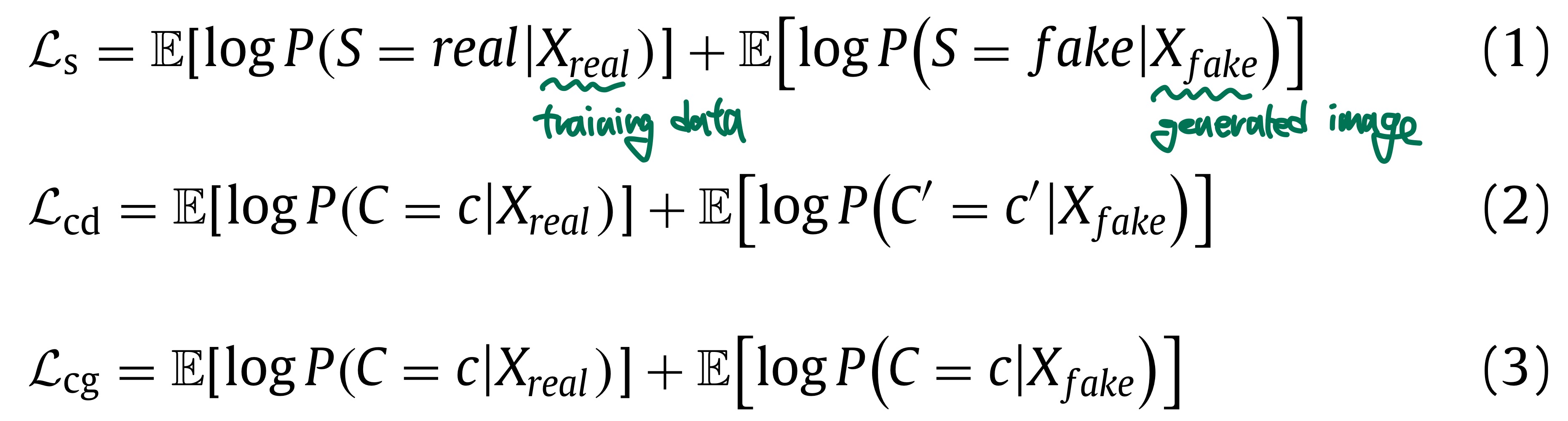

3-1. Objective Function

$L_s$: to estimate the sampling loss, which represents the probability of the sample being real or fake

$L_{cd}$: to estimate the classification loss over the discriminator

$L_{cg}$: to estimate the classification loss over the generator

$L_{cd}$ means that the discriminator classifies samples as real or fake with associated class

$L_{cg}$ means that the generator classifies fake samples as real classes

generator: to maximize the difference of $L_s$ and $L_{cg}$

discriminator: to maximize the sum of $L_s$ and $L_{cd}$

MFC-GAN generator is sampled using a noise vector conditioned on real class labels

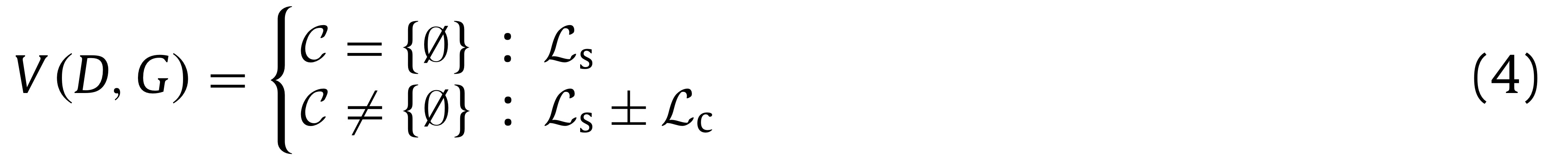

label이 없는 경우에는 위와 같이 Vanilla GAN처럼 작동하게 된다.

본 논문의 핵심은 discriminator이다. 가령, AC-GAN의 discriminator는

$L_{cd}$가 아니라 $L_{cg}$와 $L_s$의 합을 최대화하는 것이 목표이다.3-2. MFC-GAN vs. FSC-GAN

MFC-GAN: generator is penalized according to how far the generated sample is from the real class label

FSC-GAN: generator is penalized according to how far the generated sample is from the fake class label

=> this promoted early convergence of MFC-GAN

4. Experiments

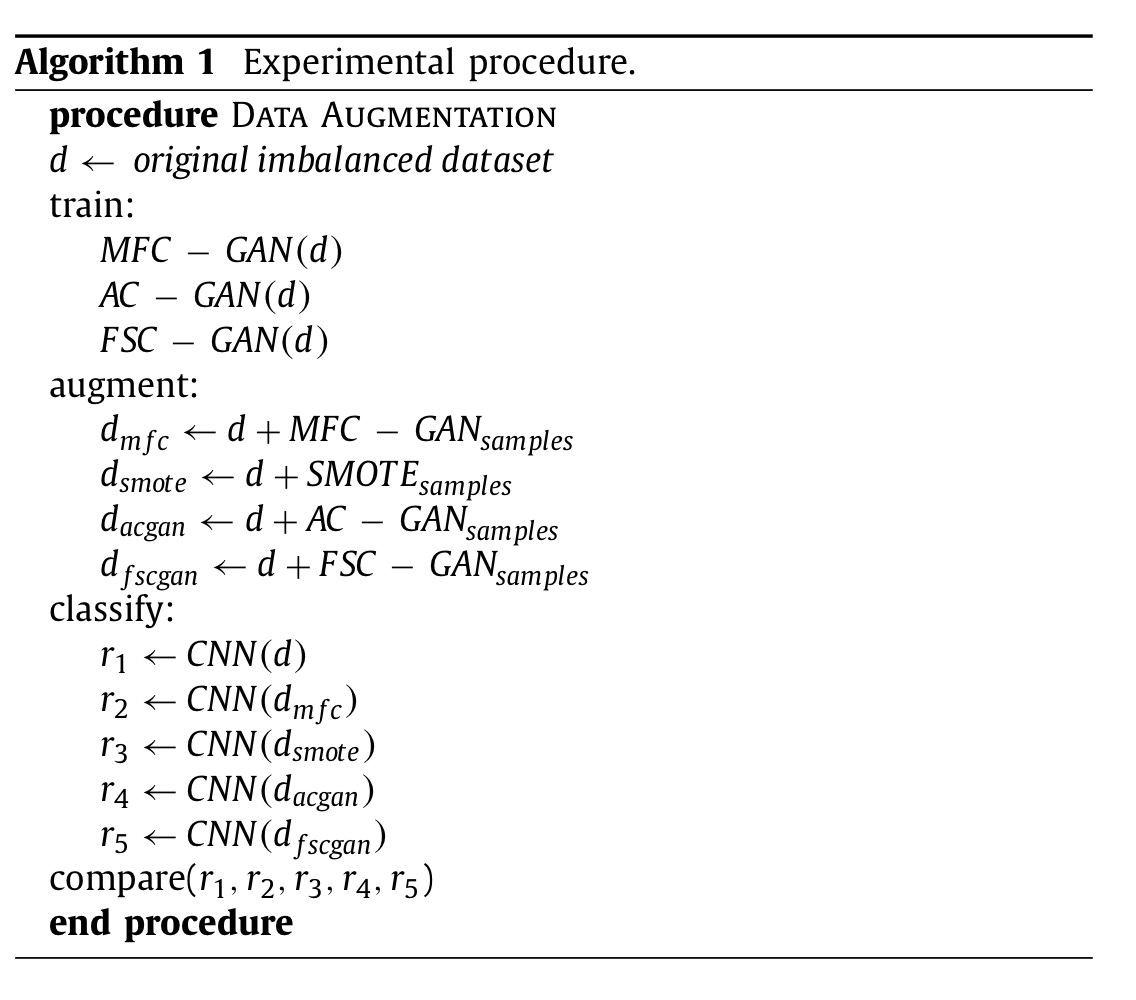

4-1. Experimental set-up

- 라이브러리:

tensorflow 1.0,Keras 2.0 - 비교모델: SMOTE, AC-GAN, FSC-GAN, 원데이터

- 공통 분류분석기: CNN

- 결과비교기준:

- 주관적: plausibility of sample(i.e., visual inspection)

- 객관적: 다양한 지표들을 통해 분류결과 성능 비교

4-2. Dataset

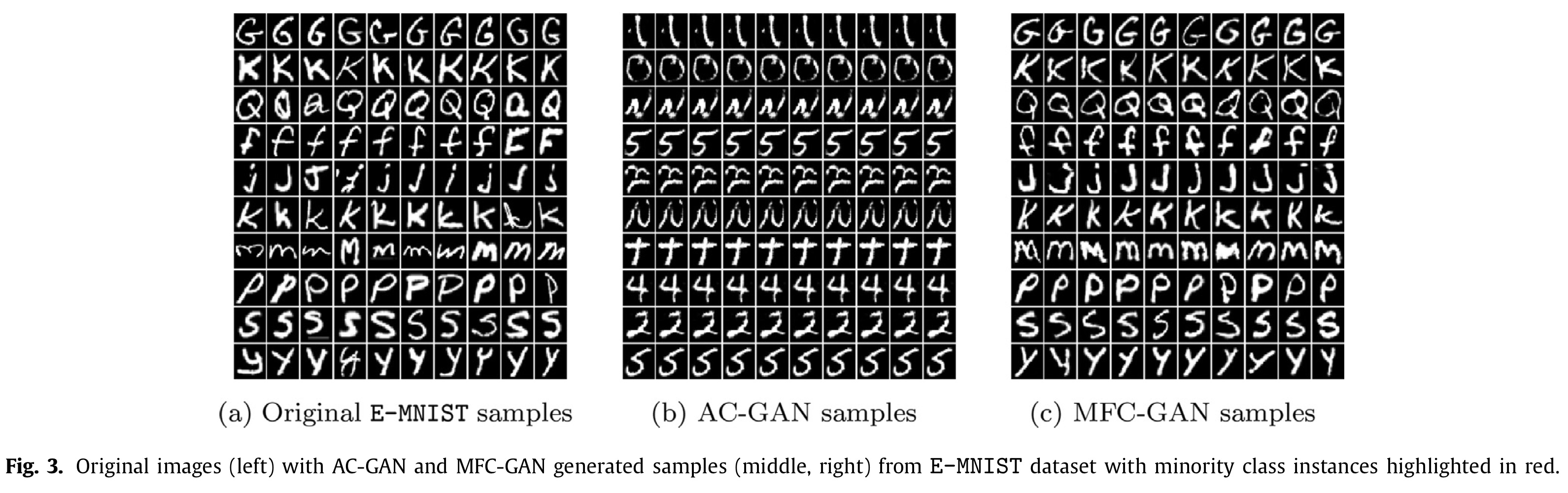

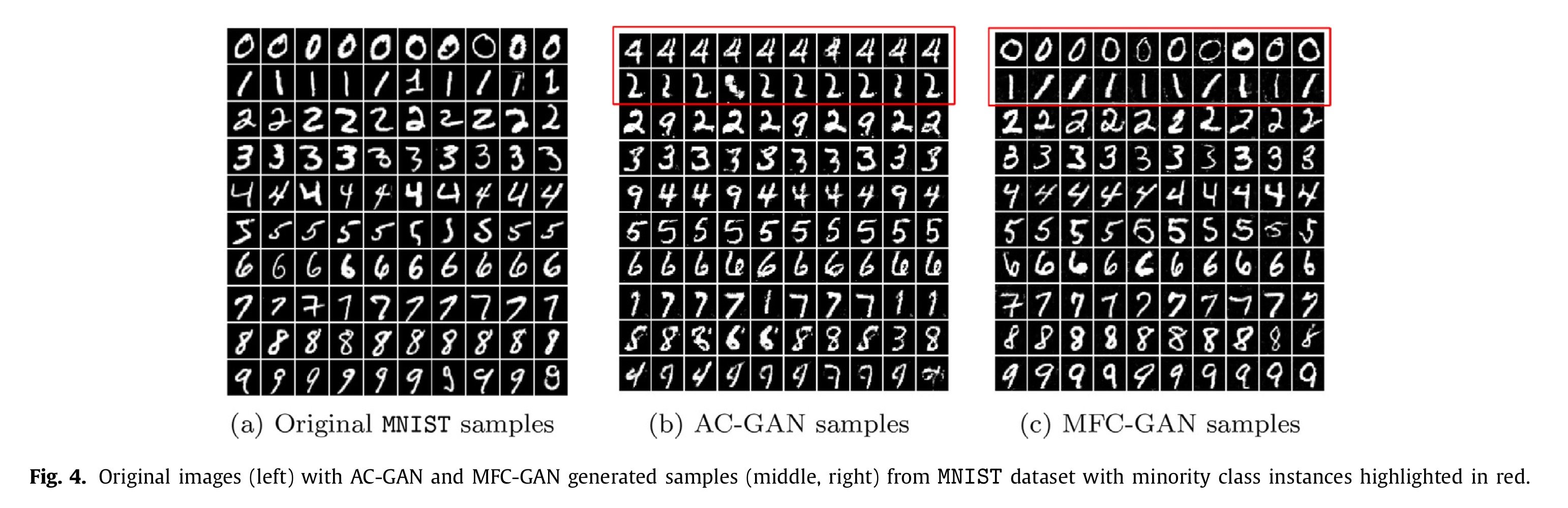

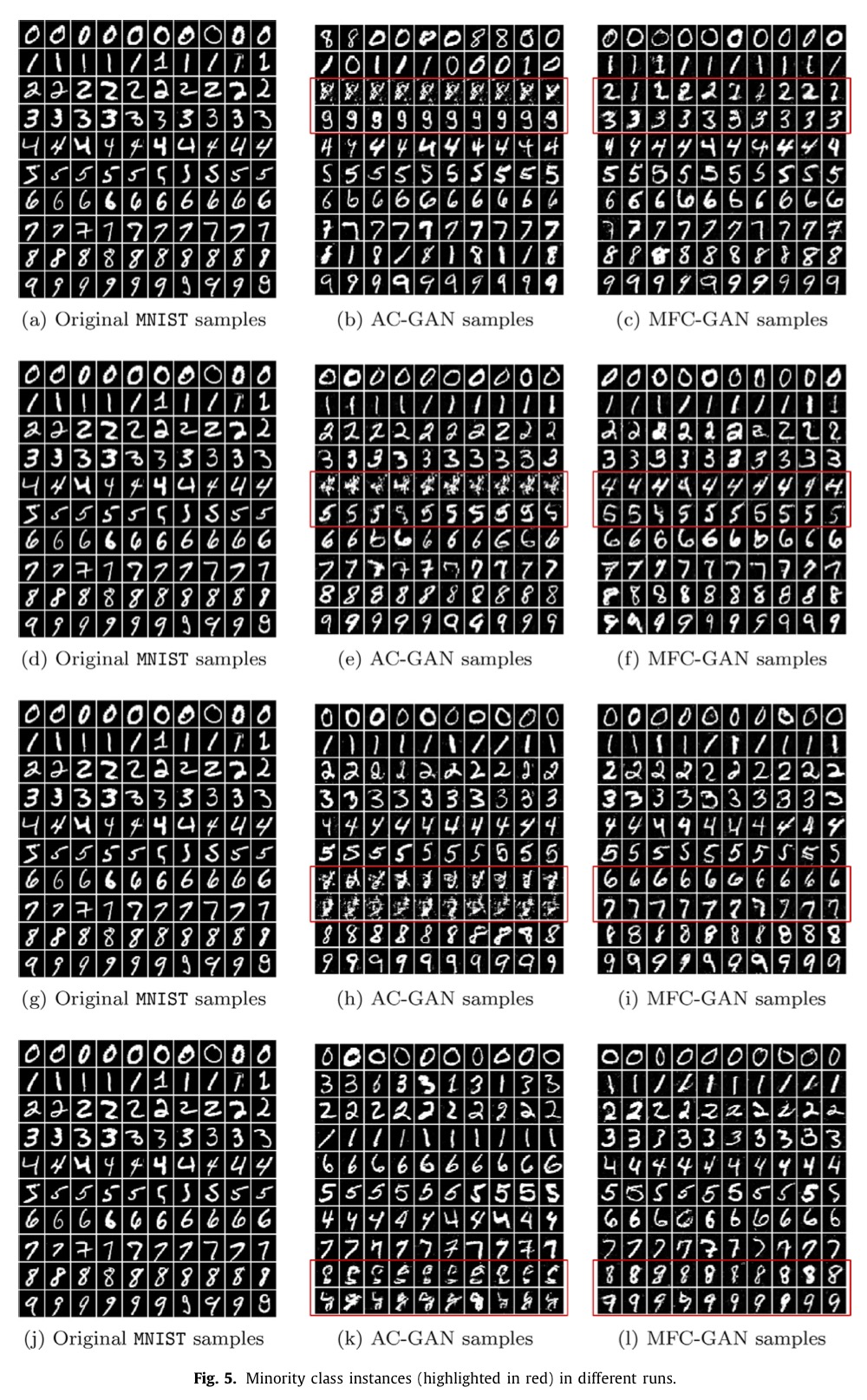

데이터: MNIST, E-MNIST, SVHN, CIFAR-10

- 대부분 강제로 imbalanced data로 만들어주고나서 활용함(특정 클래스 임의로 선택 후 undersampling)

MNIST: 2개의 클래스를 임의로 골라 원데이터의 1%(50개)씩만 활용 (run마다 다른 클래스 선택)E-MNIST: 총 81만 여개 샘플에서, 62개 클래스 중 21개의 클래스에 해당하는 샘플들이 각각 3000개 이하이다. 즉, 이미 imbalanced이다. 여기서 가장 적은 10개의 클래스를 활용하였다. (G, K, Q, f, j, k, m, p, s, y)SVHN: MNIST처럼 2개의 클래스에서 50개씩만 사용하여 강제로 imbalanced를 만들어주었다. (1,2)CIFAR-10: 10개 클래스 중 두 개의 클래스에서 1000개 중에서 50개씩만 활용하였다. 즉, 강제로 imbalanced를 만들어주었다. (Aeroplane, Automobile)

MNIST와 E-MNIST의 경우에는 FSC-GAN의 구조를 차용하였고, SVHN과 CIFAR-10에서는 AC-GAN의 구조를 사용하였다. 그리고, AC-GAN, FSC-GAN, MFC-GAN 모두의 경우에 공정한 비교를 위해 spectral weight normalization을 생성기와 분류기 모두에 추가해주었다.

4-3. Image Generation

- network switcher의 의미

Depending on the availability of labels, the network switcher feature enables both models to alternate between two training modes. The switcher is a piece-wise function that oscillates between supervised and unsupervised training.

4-4. Image Classification

CNN을 공통 분류분석기로 활용하였다.

- MNIST, E-MNIST, SVHN: 3 layers with softmax activation layer (2 convolution layers, 3x3 kernels with 2x2 max-pooling, two filter maps, fully connected layer), ReLuactivated, 0.5 dropout ratio, Adadelta optimizer

- CIFAR-10: 3 convolution layers, 0.2 dropout ratio, SGD optimizer

5. Results

-

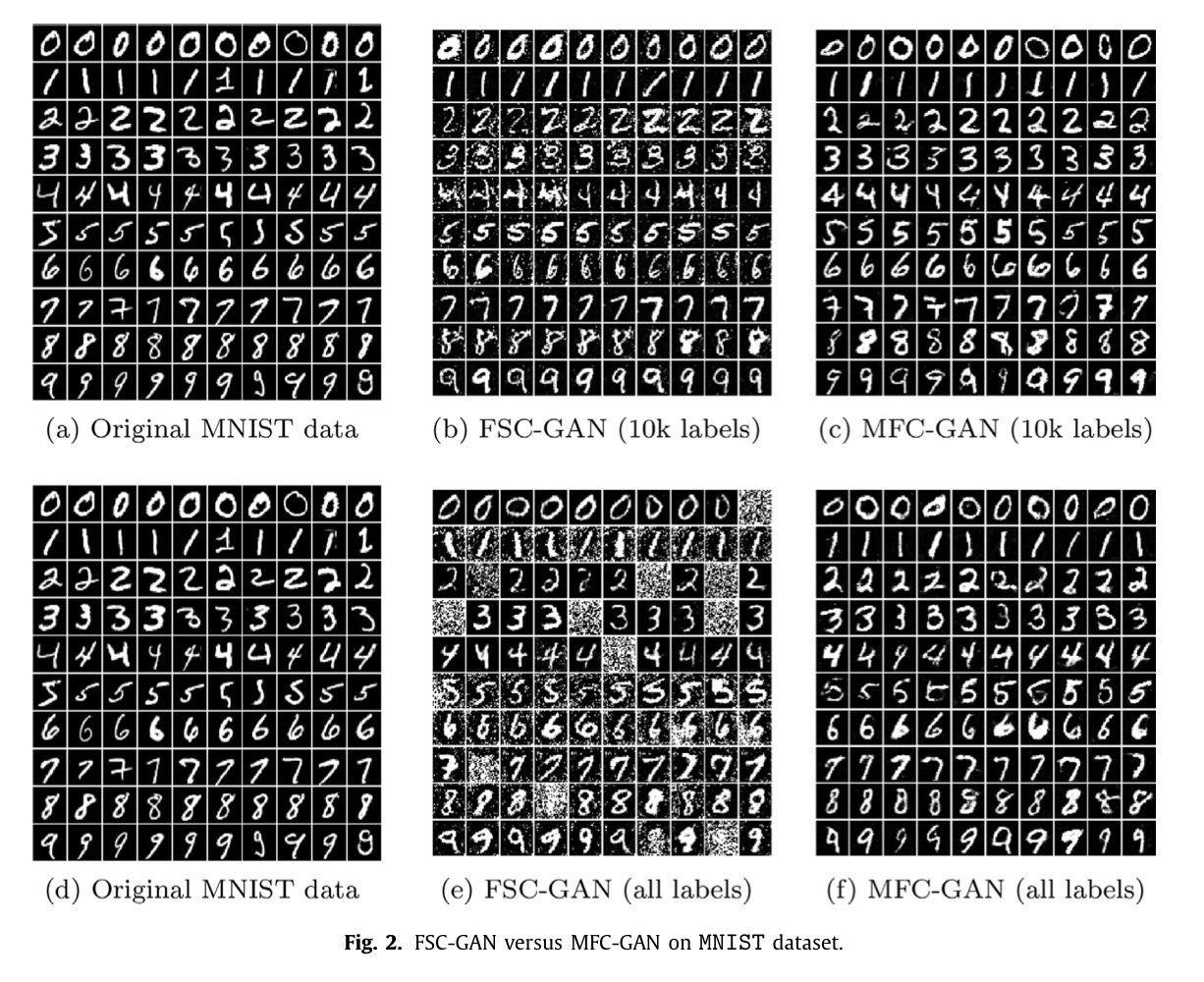

생성된 사진의 퀄리티가 우수함

딱 보더라도 MFC-GAN이 훨씬 우수한 성능으로 이미지를 만들어냄을 눈으로 확인할 수 있다. 특히 (c)를 보면, unlabeled가 5만개였는데도 성능이 괜찮게 나왔다. -

컴퓨팅 효율성

FSC-GAN은 500 epoch가 필요한 데에 비해, MFC-GAN은 50 epoch만을 필요로 했다. 즉, data augmentation에는 MFC-GAN이 보다 우수함을 알 수 있었다. -

객관적 성능지표에서도 우수함

MFC-GAN에서 민감도, balanced accuracy, G-mean 모두 높게 나타났다. 이에 반해,FSC-GAN은 모든 경우에서 성능 향상으로 이어지지 않았다. 특정 상황에서는SMOTE가 더 우수한 듯 보이기는 했지만, 이는 단순히 소수 집단에 대해 샘플이 더 많기 때문일 거라고 추측된다. 왜냐하면 샘플 수가 적은 클래스에 대해서는 성능이 안 좋은 것이 확인되었기 때문이다.

6. Discussion

The fidelity and diversity of MFC-GAN minority samples made classification easier for the CNN. The diversity of generated samples indicates no sign of mode collapse in the model.

한계점 1. CIFAR-10에서의 부족한 성능

Table3에서 알 수 있듯이, CIFAR-10에서는 모든 모델들이 성능이 좋지 않게 나타났다. 이는 32x32라는 작은 이미지 사이즈에 비해 많은 정보량이 들어가있는 분류작업이기 때문이라고 보인다. (그래도 그중에서 그나마 MFC-GAN이 낫기는 했다.)

한계점2. 특정 클래스에서의 부족한 성능

특정 클래스에 대해서는 모든 모델들이 모두 성능이 안 좋게 나타났다. E-MNIST의 경우에는 m과 s가 이에 해당한다. 이는 s가 5, S, 2, z와 비슷하기 때문이라고 저자들은 보고 있다.

—

Critical Point (MY OWN OPINION)

-

E-MINST, SVHN, CIFAR-10에서 다른 클래스가 minority class로서 선정이 되었다면 어떻게 결과가 달라졌을지 알 수가 없다. 선택된 클래스의 특성에 따른 결과가 아니라고 결론 지을 수가 없다는 한계점이 있다.

-

기본 CNN 모형 말고 ResNet나 YOLO와 같이 다른 architecture를 사용했으면 어땠을까라는 생각이 있다.

—

참고사이트

[1] Spectral Normalization(1)

[2] Spectral Normalization(2)